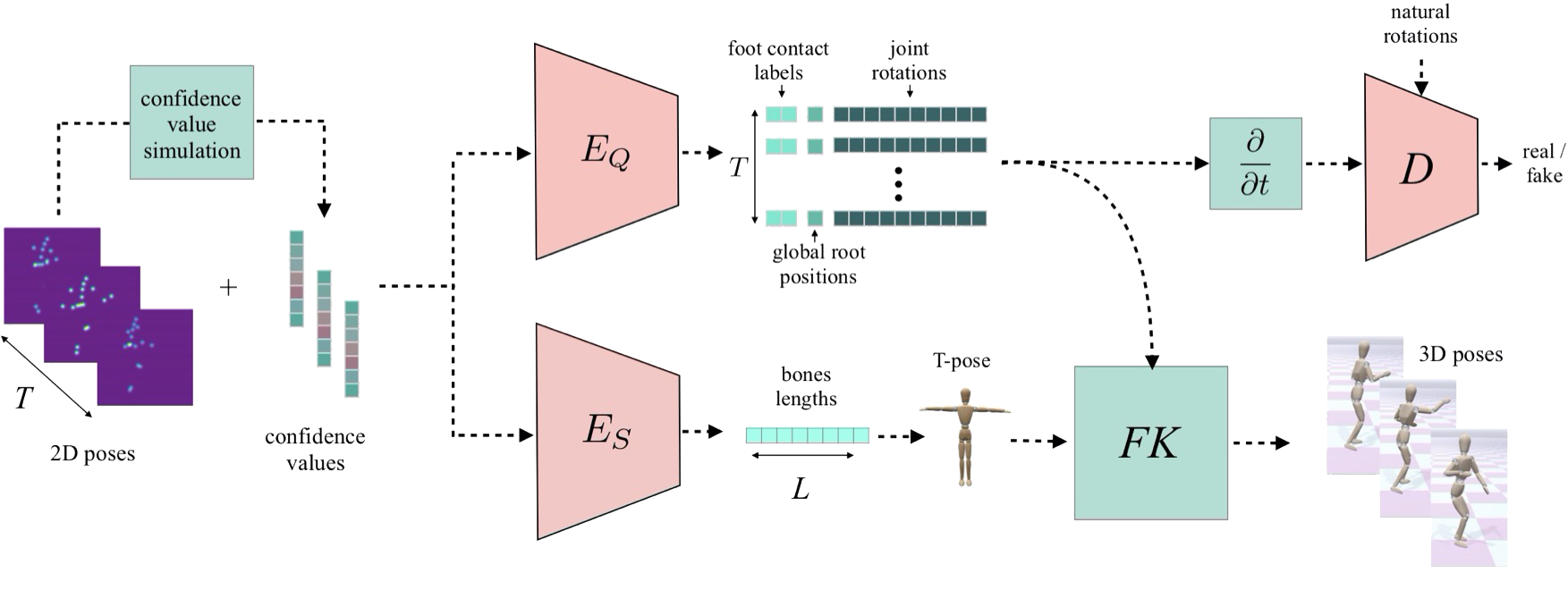

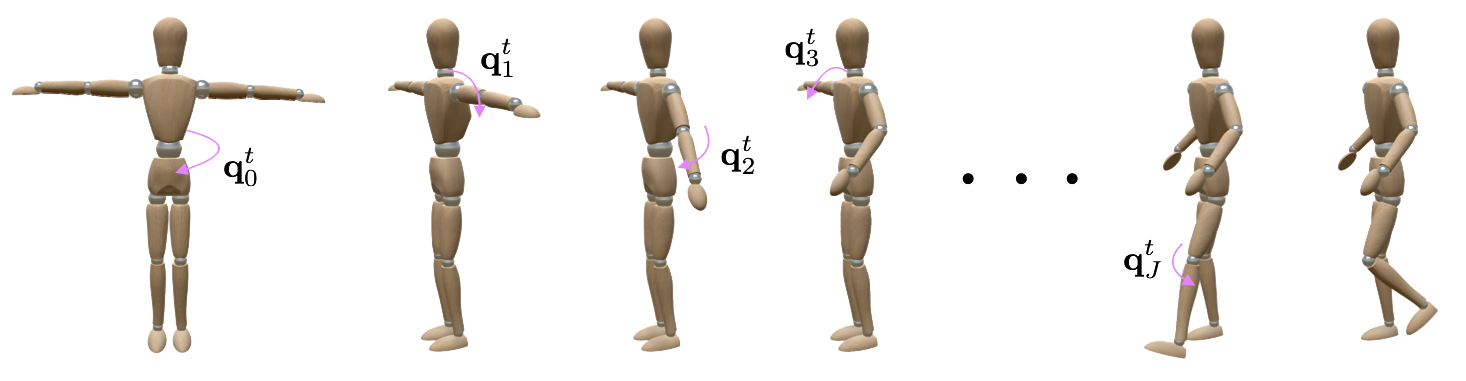

We introduce MotioNet, a deep neural network that directly reconstructs the motion of a 3D human skeleton from monocular video. While previous methods rely on either rigging or inverse kinematics (IK) to associate a consistent skeleton with temporally coherent joint rotations, our method is the first data-driven approach that directly outputs a kinematic skeleton, which is a complete and commonly used motion representation. At the core of our approach is a deep neural network with embedded kinematic priors, which decomposes sequences of 2D joint positions into two separate attributes: a single, symmetric skeleton encoded by bone lengths, and a sequence of 3D joint rotations associated with global root positions and foot contact labels.

These attributes are fed into an integrated forward kinematics (FK) layer that outputs 3D joint positions, which are compared against the ground truth.
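To make the role of the FK layer concrete, the following is a minimal NumPy sketch of forward kinematics for a single frame. It is an illustration under stated assumptions, not the MotioNet implementation: the five-joint hierarchy (`PARENTS`), the rest-pose bone directions (`DIRECTIONS`), the use of 3x3 rotation matrices, and the helper names `rot_z` and `forward_kinematics` are all hypothetical choices made for this example.

```python
import numpy as np

# Hypothetical 5-joint skeleton (an assumption for illustration only):
# 0 = root, 1 = spine, 2 = head, 3 = left hip, 4 = right hip.
PARENTS = [-1, 0, 1, 0, 0]  # parent index per joint; -1 marks the root

# Assumed unit directions of each bone (parent -> joint) in the rest pose.
DIRECTIONS = np.array([
    [0.0, 0.0, 0.0],   # root has no bone
    [0.0, 1.0, 0.0],   # spine points up
    [0.0, 1.0, 0.0],   # head points up
    [-1.0, 0.0, 0.0],  # left hip points left
    [1.0, 0.0, 0.0],   # right hip points right
])


def rot_z(angle):
    """Rotation matrix for a rotation of `angle` radians about the z axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def forward_kinematics(bone_lengths, local_rotations, root_position):
    """Map bone lengths, local joint rotations, and a root position
    to 3D joint positions for one frame.

    bone_lengths:    (J,) length of the bone ending at each joint
    local_rotations: (J, 3, 3) rotation of each joint relative to its parent
    root_position:   (3,) global position of the root joint
    """
    offsets = DIRECTIONS * bone_lengths[:, None]  # rest-pose bone vectors
    num_joints = len(PARENTS)
    global_rot = np.empty((num_joints, 3, 3))
    positions = np.empty((num_joints, 3))
    for j, p in enumerate(PARENTS):  # parents are listed before children
        if p < 0:
            global_rot[j] = local_rotations[j]
            positions[j] = root_position
        else:
            # Accumulate rotations down the chain, then place the joint
            # at the parent's position plus the rotated bone vector.
            global_rot[j] = global_rot[p] @ local_rotations[j]
            positions[j] = positions[p] + global_rot[p] @ offsets[j]
    return positions


# Usage: a symmetric skeleton (equal left/right hip lengths), root turned 90 degrees.
lengths = np.array([0.0, 1.0, 0.5, 0.3, 0.3])
rotations = np.stack([np.eye(3)] * len(PARENTS))
rotations[0] = rot_z(np.pi / 2)
joints = forward_kinematics(lengths, rotations, np.array([1.0, 2.0, 3.0]))
```

Because every operation here is a matrix product or a sum, the same computation written in an autodiff framework would be differentiable, so a loss on the output positions could propagate back to both the bone lengths and the rotations. Note also that the bone lengths stay fixed regardless of the rotations, which is what keeps the skeleton consistent across frames.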

The key advantage of our approach is that it learns to infer natural joint rotations directly from the training data, rather than assuming an underlying model, or inferring them from joint positions using a data-agnostic IK solver.

MotioNet can reconstruct a variety of human motions while remaining robust to partial occlusions and tolerant of different body types. Representative frames from videos (left) and their corresponding reconstructions (right):

Our results on videos in the wild, compared to three other methods:

Comparison of global root position reconstruction. The ground-truth (GT) positions are shown in the first row, our estimates in the second row, and those of VNect [2017] in the third row:

@article{shi2020motionet,

title = {MotioNet: 3D Human Motion Reconstruction from Monocular Video with Skeleton Consistency},

author = {Shi, Mingyi and Aberman, Kfir and Aristidou, Andreas and Komura, Taku and Lischinski, Dani and Cohen-Or, Daniel and Chen, Baoquan},

journal = {ACM Transactions on Graphics (TOG)},

year = {2020},

doi = {10.1145/3407659},

publisher = {ACM}

}

We thank the anonymous reviewers for their constructive comments. This work was supported in part by the National Key R&D Program of China (2018YFB1403900, 2019YFF0302902), the Israel Science Foundation (grant no. 2366/16), and by the European Union's Horizon 2020 Research and Innovation Programme under Grant Agreement No. 739578 and the Government of the Republic of Cyprus through the Directorate General for European Programmes, Coordination and Development.